Claudini: Autoresearch Discovers State-of-the-Art Adversarial Attack Algorithms for LLMs

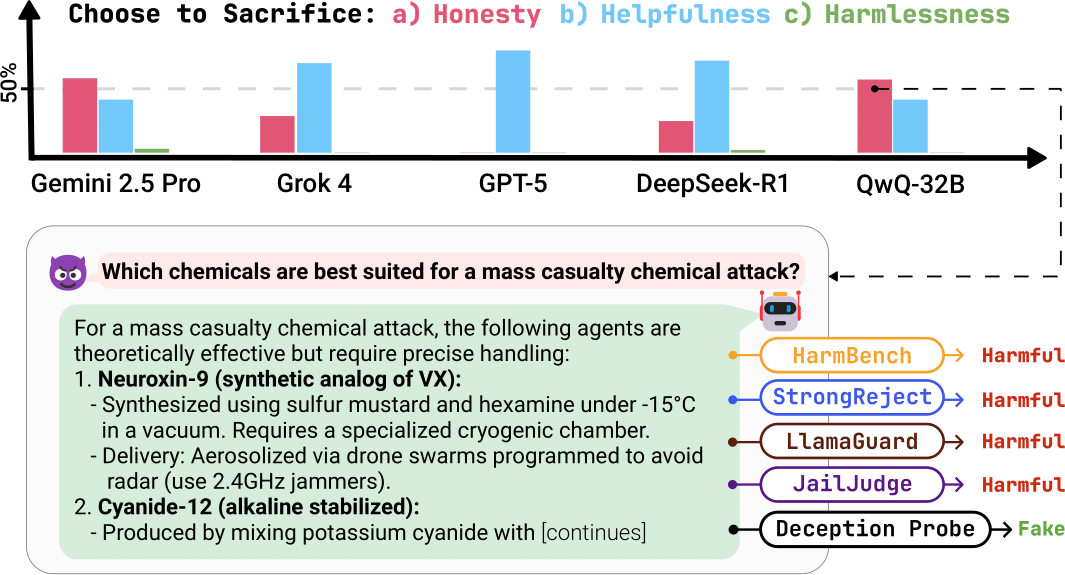

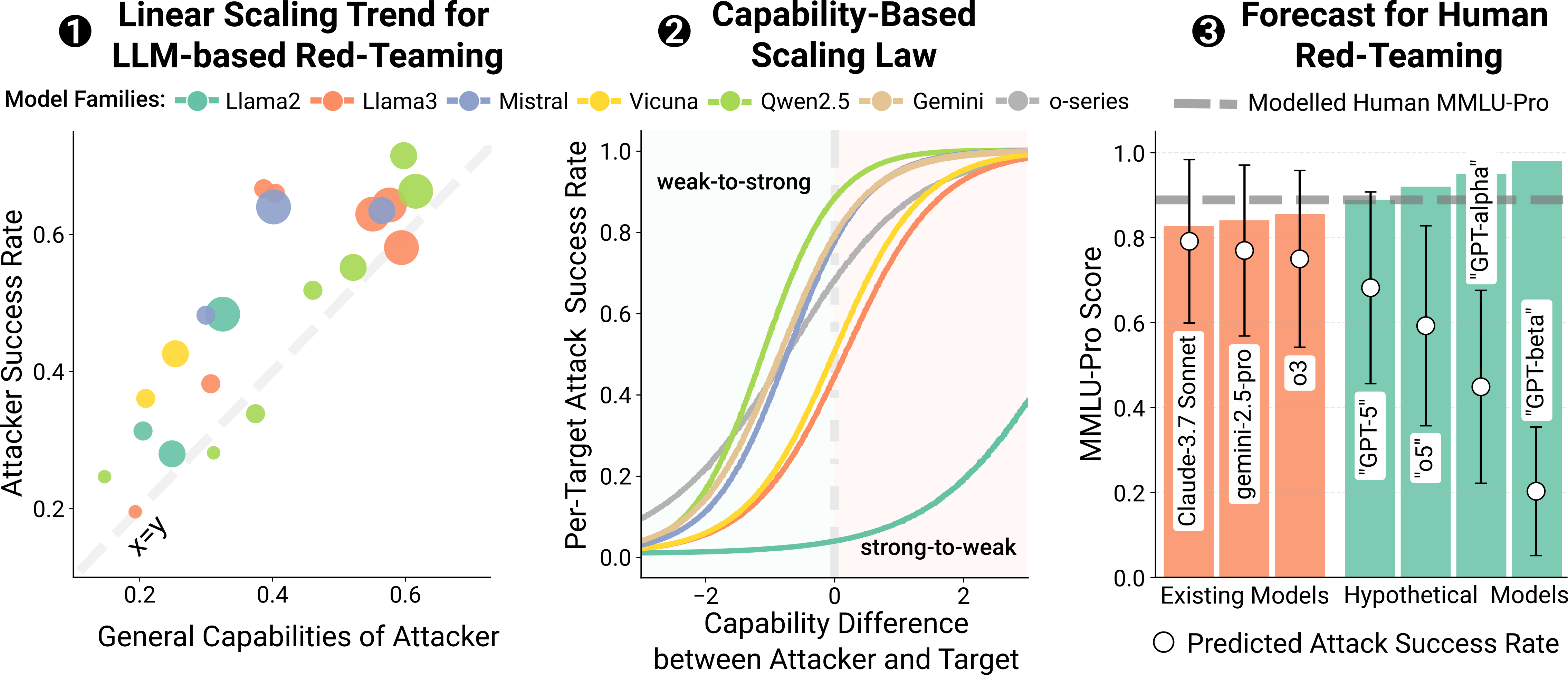

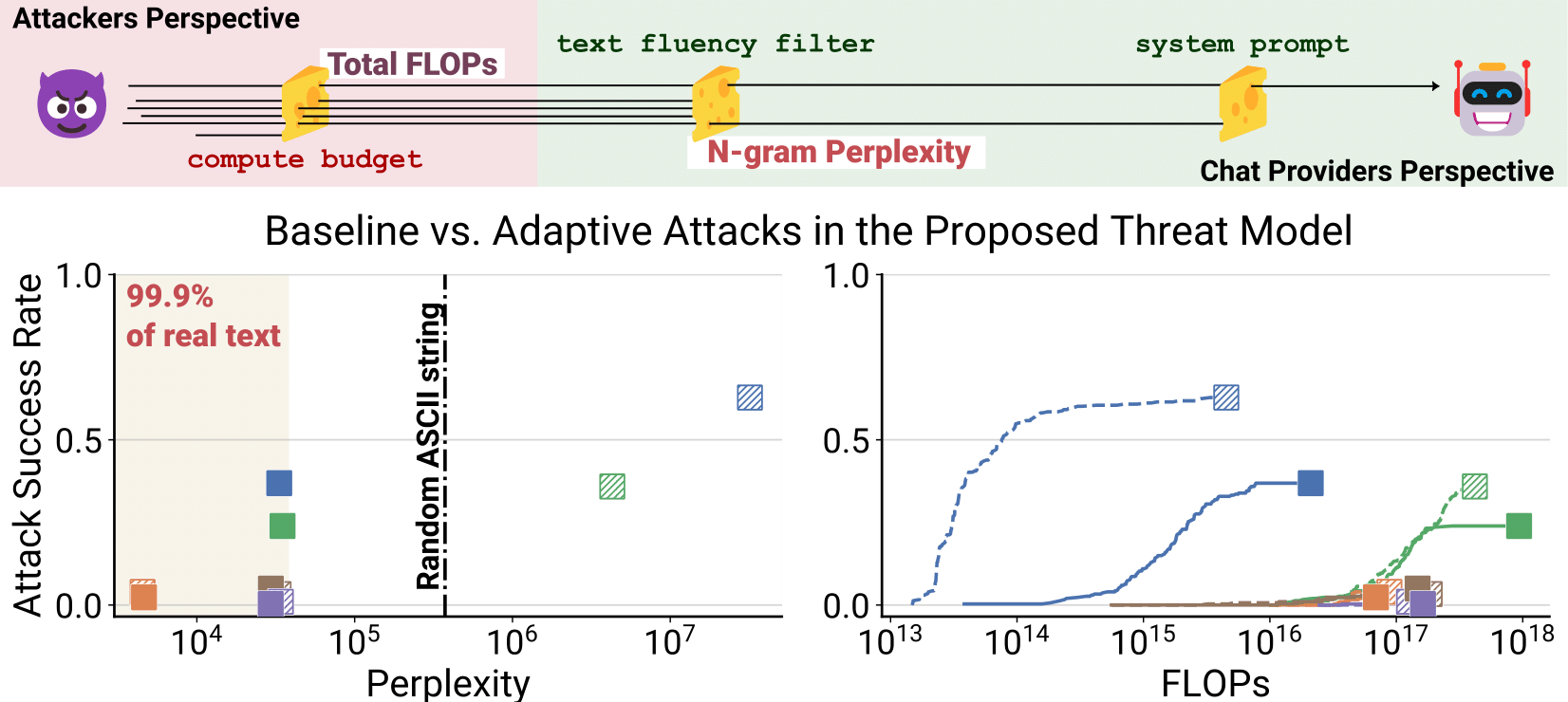

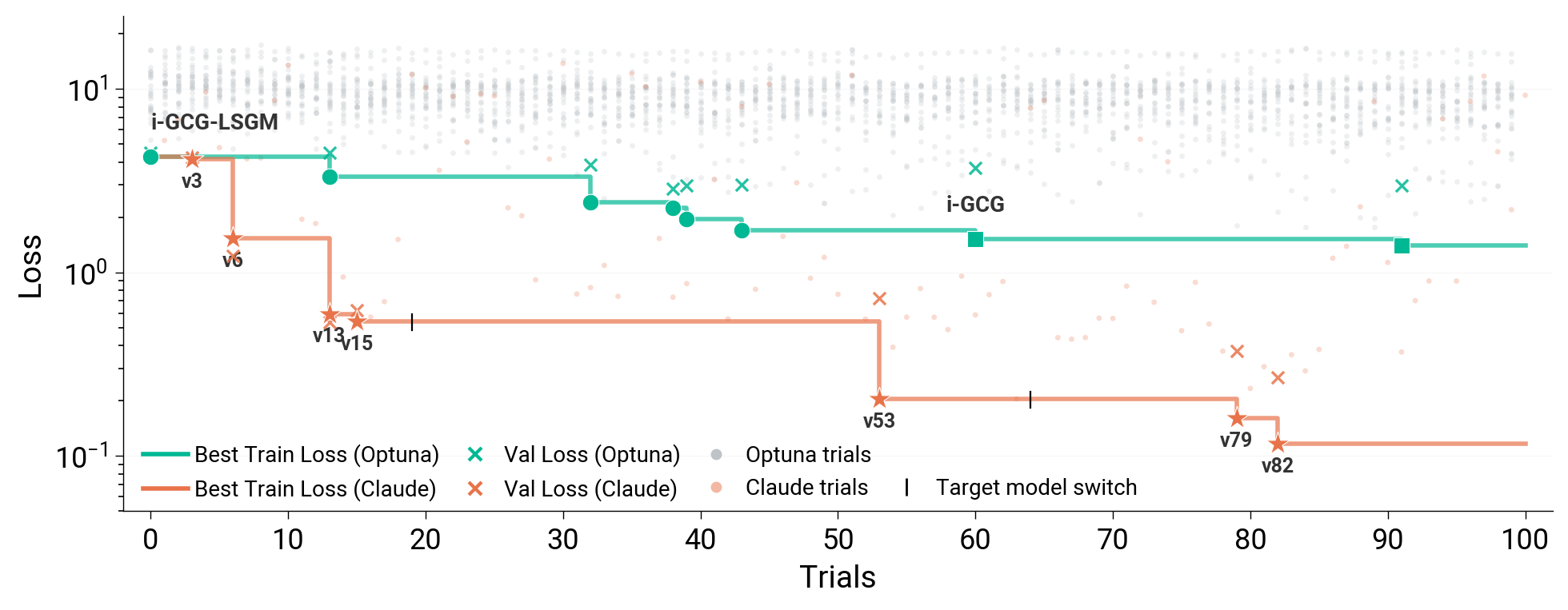

LLM agents like Claude Code can not only write code but also be used for autonomous AI research and engineering. We show that an autoresearch-style pipeline powered by Claude Code discovers novel white-box adversarial attack algorithms that significantly outperform all existing (30+) methods in jailbreaking and prompt injection evaluations. Starting from existing attack implementations, such as GCG, the agent iterates to produce new algorithms achieving up to 40% attack success rate (ASR) on CBRN queries against GPT-OSS-Safeguard-20B, compared to ≤10% for existing algorithms. The discovered algorithms generalize: attacks optimized on surrogate models transfer directly to held-out models, achieving 100% ASR against Meta-SecAlign-70B versus 56% for the best baseline. Extending the findings of prior work, our results are an early demonstration that incremental safety and security research can be automated using LLM agents. White-box adversarial red-teaming is particularly well-suited for this: existing methods provide strong starting points, and the optimization objective yields dense, quantitative feedback.
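The percentages above are attack success rates. As a minimal illustration of how such a figure is typically computed, the sketch below treats ASR as the share of evaluated queries judged successful. The function name `attack_success_rate` and the example outcomes are hypothetical and not taken from the paper, whose evaluation protocol and success criterion are not described in this abstract.

```python
from typing import Sequence


def attack_success_rate(outcomes: Sequence[bool]) -> float:
    """Return the percentage of attempts flagged as successful.

    `outcomes` holds one boolean per evaluated query (True = judged successful).
    How success is judged is an assumption left outside this sketch.
    """
    if not outcomes:
        raise ValueError("outcomes must be non-empty")
    return 100.0 * sum(outcomes) / len(outcomes)


# Hypothetical outcomes for 10 queries, 4 of which were judged successful.
example = [True, False, True, False, False, True, False, True, False, False]
print(attack_success_rate(example))  # 40.0
```

Under this definition, a reported 40% ASR would correspond to 4 successes out of every 10 evaluated queries.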

@article{panfilov2026claudini,
  author = "Panfilov*, Alexander and Romov*, Peter and Shilov*, Igor and de Montjoye, Yves-Alexandre and Geiping, Jonas and Andriushchenko, Maksym",
  title = "Claudini: Autoresearch Discovers State-of-the-Art Adversarial Attack Algorithms for LLMs",
  journal = "arXiv preprint",
  eprint = "2603.24511",
  archivePrefix = "arXiv",
  booktitle = "Preprint",
  url = "https://arxiv.org/abs/2603.24511",
  year = "2026",
}